Dynamic DNS has been around for a good while now, allowing users who have dynamic IPs (or even those with static IPs, no DNS, and bad memory) to use a hostname from their dynamic DNS provider that points to their home IP.

Dynamic DNS makes it easier for a user to connect back to their home IP and interact with devices in their network. It provides a mapping between a constant hostname (e.g. cybergibbons.swanndvr.net) and your IP (82.158.226.34).

A device on your network (maybe your router, often a specific device) periodically communicates your IP to the dynamic DNS service. The domain name resolution changes as your IP changes. This means that if your IP changes, you can still connect to your home network using the constant hostname.
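The update behaviour described above can be sketched roughly as follows. This is a minimal illustration, not how Swann's or any other provider's client actually works: `DdnsUpdater`, `get_public_ip` and `send_update` are hypothetical names, and the real lookup and update calls are passed in as functions because their details vary between providers.

```python
from typing import Callable, Optional


class DdnsUpdater:
    """Remembers the last IP reported and only sends an update when it changes."""

    def __init__(
        self,
        hostname: str,
        get_public_ip: Callable[[], str],          # hypothetical: returns the current public IP
        send_update: Callable[[str, str], None],   # hypothetical: tells the provider (hostname, ip)
    ) -> None:
        self._hostname = hostname
        self._get_public_ip = get_public_ip
        self._send_update = send_update
        self._last_ip: Optional[str] = None

    def poll(self) -> bool:
        """Check the public IP once; return True if an update was sent."""
        current = self._get_public_ip()
        if current == self._last_ip:
            return False  # nothing changed, so the DNS record is still correct
        self._send_update(self._hostname, current)
        self._last_ip = current
        return True
```

A real device would call `poll()` on a timer, which is why the hostname keeps resolving to the right place shortly after the IP changes.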

Simply knowing the IP is not enough – you need to be able to actually connect to the devices. Normally a home router has a firewall set to reject nearly all incoming traffic. A user needs to punch a hole through the firewall, often using something called port-forwarding.

This exposes a device on your private network to the wider Internet. You are no longer relying on the security of your router’s firewall, but on the security of the device you have exposed.

Devices like IP CCTV cameras, network/digital video recorders, thermostats, and home automation hubs often rely on this combination of port-forwarding and dynamic DNS.

For example, a lot of Swann DVRs recommend you port-forward port 85 from your router back to your DVR. Swann then runs their own dynamic DNS service, which the DVR can be configured to communicate with.

Sounds like a great idea, doesn’t it? Users can easily connect back to their IoT devices from outside their home.

Unfortunately, dynamic DNS, especially when it is provided by device manufacturers, is generally a bad idea.

Why?

Finding hostnames

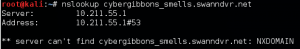

Each user that uses dynamic DNS has a unique subdomain – e.g. cybergibbons.swanndvr.net – try it using nslookup:

(no, that’s not my own IP)

Now try something that doesn’t exist:

And you can see we get no response (as long as no-one registers that domain after I publish this…)
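The same exists/doesn't-exist check can be done from a script. The sketch below uses Python's standard `socket.gethostbyname`; the `resolve` helper and its `resolver` parameter are my own additions (the parameter only exists so the lookup can be swapped out for testing).

```python
import socket
from typing import Callable, Optional


def resolve(
    hostname: str,
    resolver: Callable[[str], str] = socket.gethostbyname,
) -> Optional[str]:
    """Return the IPv4 address for hostname, or None if it does not resolve."""
    try:
        return resolver(hostname)
    except socket.gaierror:
        # Raised when the name cannot be resolved (e.g. the subdomain is unregistered).
        return None
```

Calling `resolve("cybergibbons.swanndvr.net")` should give an address for as long as that hostname stays registered, while a name nobody has registered returns `None`.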

We can do this as a bulk operation, using a large wordlist and a tool called subbrute. This tool is commonly used during the discovery phase of a pen test to find new hosts. Subbrute uses a wide array of DNS servers rather than just your own one, allowing it to run quicker and with lower risk of rate limiting.

It’s important to note that the operator of swanndvr.net is highly unlikely to notice someone brute-forcing sub-domains like this. They might see a slightly higher rate than average of lookups as new DNS servers end up having to make recursive requests for their authoritative records. But because those requests arrive from the intermediate DNS servers rather than from the attacker, the attacker’s IP will remain entirely hidden. The dynamic DNS users will see nothing at all from this attack.

The wordlist required for this application differs from a typical host wordlist. We don’t want to find ftp, dev1, vpn, etc. We want to find optus, redrover, zion, pchome, concordia. These are closer to usernames than normal hostnames. I used a custom list of usernames and hostnames built up over the last few years for this, but other sources like fuzzdb and SecLists are good starting points.

Running subbrute against swanndvr.net quickly got me a list of 2401 valid hostnames. I’m sure a longer wordlist would reveal more hostnames, but 2401 is enough for this.
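To make the idea concrete, here is a very simplified sketch of wordlist-based subdomain enumeration. It is not subbrute and does not reproduce its behaviour: it only uses the system resolver (so it is slower and easier to rate-limit), and it makes no attempt to detect wildcard DNS. The function names and the `resolver` and `workers` parameters are assumptions made for illustration.

```python
import socket
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, Optional


def _lookup(hostname: str, resolver: Callable[[str], str]) -> Optional[str]:
    """Resolve one hostname, returning None when it does not exist."""
    try:
        return resolver(hostname)
    except socket.gaierror:
        return None


def brute_subdomains(
    domain: str,
    words: Iterable[str],
    resolver: Callable[[str], str] = socket.gethostbyname,
    workers: int = 20,
) -> Dict[str, str]:
    """Try word.domain for every word and return {hostname: ip} for the ones that resolve."""
    # Build candidate hostnames, skipping blank lines in the wordlist.
    candidates = [f"{w.strip().lower()}.{domain}" for w in words if w.strip()]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with candidates.
        results = pool.map(lambda host: _lookup(host, resolver), candidates)
        return {host: ip for host, ip in zip(candidates, results) if ip is not None}
```

With a wordlist file, something like `brute_subdomains("swanndvr.net", open("wordlist.txt"))` would return the valid hostnames, which can then be written one per line to a file such as swann_hosts.txt.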

Scanning hosts for webservices

Given that swanndvr.net is intended to be used by people who own Swann products, I thought I would concentrate on ports that Swann products commonly use. These include the typical ports 80 (HTTP) and 443 (HTTPS), but also 85 (a lot of Swann products run HTTP on this port).

Let’s fire up nmap:

nmap -vv -Pn -iL swann_hosts.txt -T5 -p80,85,443 --open -oA nmap_swann | tee nmap_swann.txt

- -vv – verbose output

- -Pn – don’t ping check host first

- -iL swann_hosts.txt – take this file as the input

- -T5 – scan fast

- -p80,85,443 – scan ports 80, 85 and 443

- --open – only show open ports

- -oA nmap_swann – output in greppable, normal nmap and XML formats

- | tee nmap_swann.txt – also copy the console output to a text file

This scan will run quickly – we are only trying three ports on (mainly) consumer routers, so there is little risk of anything clamming up with the fast scan.

After this, we have 335 hosts running something on one of these three ports. That’s a lot of hosts to check manually. I won’t post the results here as they are likely transient.
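If you want to count or filter the results yourself, the XML file written by -oA is straightforward to parse. The sketch below uses Python's standard `xml.etree.ElementTree` and assumes the usual nmap XML layout (`host` elements containing `address` and `ports/port/state`); the `open_ports_by_host` helper is my own name, not part of nmap or peepingtom.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List


def open_ports_by_host(root: ET.Element) -> Dict[str, List[int]]:
    """Map each host address in an nmap XML tree to its list of open ports."""
    hosts: Dict[str, List[int]] = {}
    for host in root.findall("host"):
        address = host.find("address")  # first address element, normally the IP
        if address is None:
            continue
        ports = []
        for port in host.findall("ports/port"):
            state = port.find("state")
            if state is not None and state.get("state") == "open":
                ports.append(int(port.get("portid")))
        if ports:
            hosts[address.get("addr")] = ports
    return hosts
```

For the scan above, `open_ports_by_host(ET.parse("nmap_swann.xml").getroot())` gives a dictionary whose length is the number of hosts with at least one of the three ports open.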

Screengrabbing hosts

Luckily there is a tool that is designed to take a list of hosts/services and grab a screenshot of each one. It’s called peepingtom. It uses a library called PhantomJS to render the webpages, save a screenshot and the source, and present it in a nice HTML file. It also works with nmap output files.

At the moment, there are no binaries available for PhantomJS, so you will need to build it yourself under Linux. This takes quite a while.

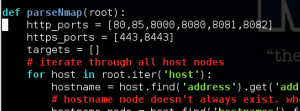

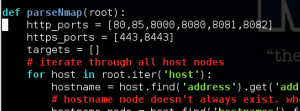

Running peepingtom is very simple, but it doesn’t, by default, treat port 85 as HTTP. We need to edit the peepingtom.py file. In the function parseNmap, add 85 to the http_ports:

Now we run peepingtom:

./peepingtom.py -x nmap_swann.xml

It will take quite a while to run. Peepingtom won’t do anything complex with JavaScript, redirects, Flash etc. so some of the results will be basic, but it’s easy enough to confirm the interesting results in a browser.

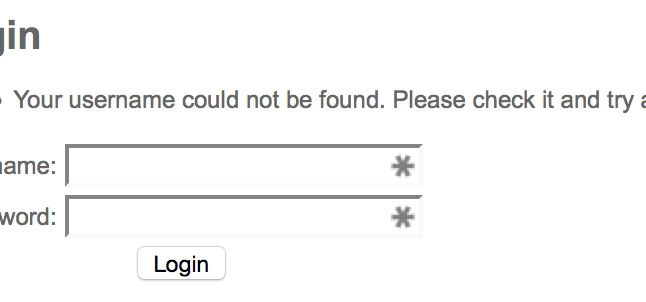

What do we find?

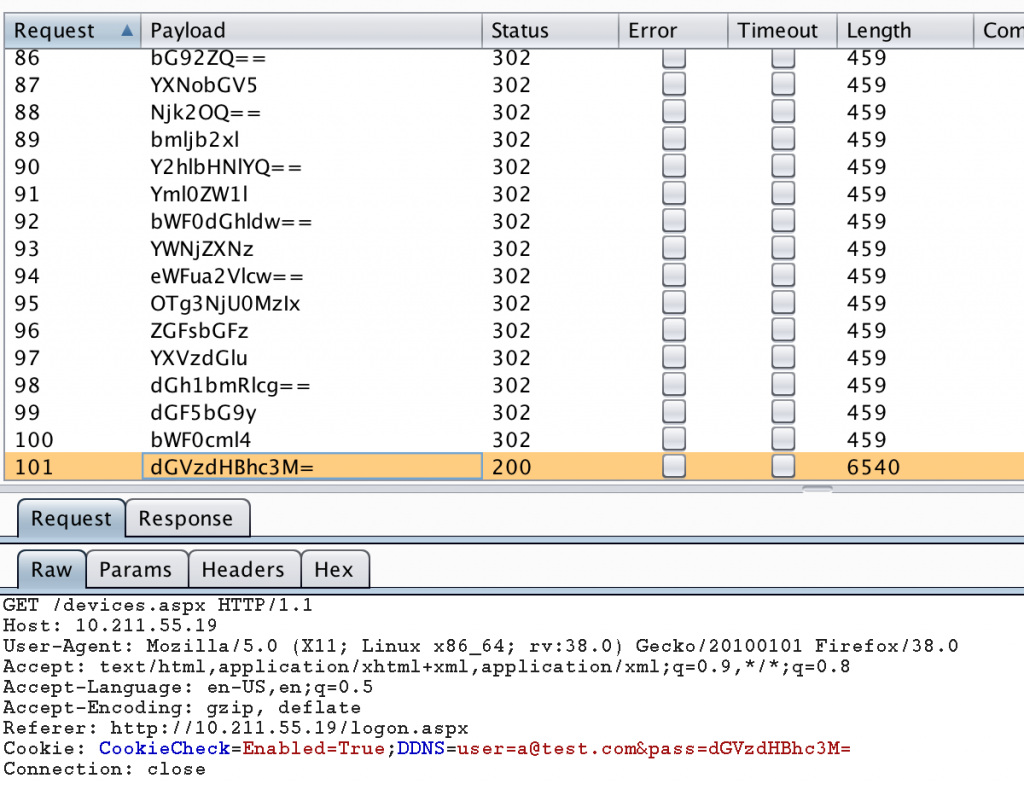

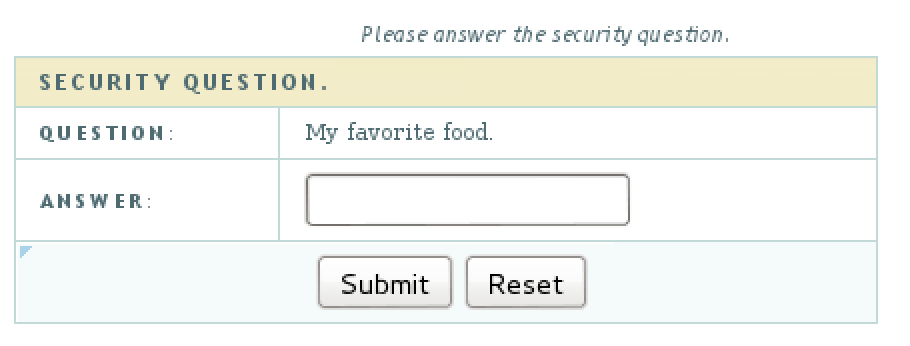

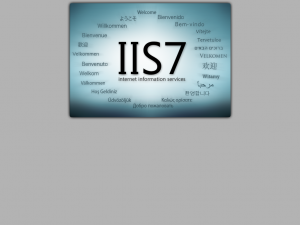

Lots of IIS, generally vulnerable to MS15-034 (27/335 hosts running IIS, 21 vulnerable). I guess people into DVRs like tinkering with IT and forgetting about what they are running.

Lots of DVRs, which may (or may not) use default (or no) credentials.

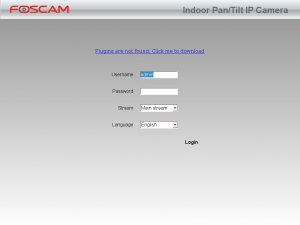

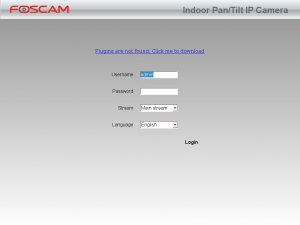

Some IP cameras:

Lots and lots of modems and routers, some with no login credentials at all.

In fact, it looks like about 85 hosts are running DVRs, and about 30 of these have an easy-to-exploit vulnerability that I found a few months back (responsible disclosure ongoing…) that results in root access to a fairly powerful embedded Linux box.

Scanning hosts for other typically insecure services

Going back for another nmap scan, this time on ports 21 (FTP), 22 (SSH), 23 (Telnet) and 25 (SMTP), we find even more hosts running services. Telnet is very popular.

Conclusion

So what is the issue here?

Dynamic DNS gives me a very easy way of identifying hosts that run multiple, likely insecure services/devices including those made by specific manufacturers.

These IoT devices nearly always have some vulnerabilities and very rarely receive any firmware updates to fix them.

It’s like a big flag shouting “hack me”!

I could take over 30 DVRs just from this small amount of work and use them for whatever I want.

It would take me much longer to scan the entire IPv4 address space to find these specific devices.

What’s the solution? Stop relying on port-forwarding to allow connectivity to your devices. If you really need to expose them, make them secure and don’t allow default credentials!