Today, CERT/CC will be disclosing a series of vulnerabilities I have discovered in one particular alarm signalling product made by CSL Dualcom – the CS2300-R. These are:

- CWE-287: Improper Authentication – CVE-2015-7285

- CWE-327: Use of a Broken or Risky Cryptographic Algorithm – CVE-2015-7286

- CWE-255: Credentials Management – CVE-2015-7287

- CWE-912: Hidden Functionality – CVE-2015-7288

The purpose of this blog post is to act as an intermediate step between the CERT disclosure and my detailed report. It is for people who are interested in some of the detail but don’t want to read a 27-page document.

First, some context.

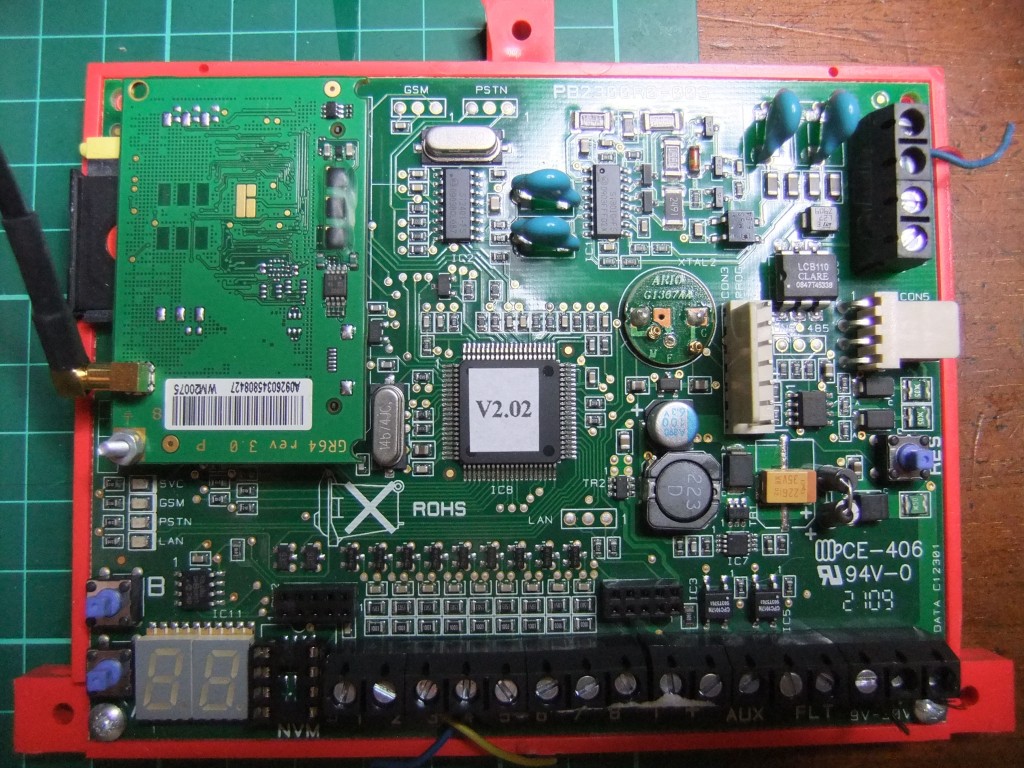

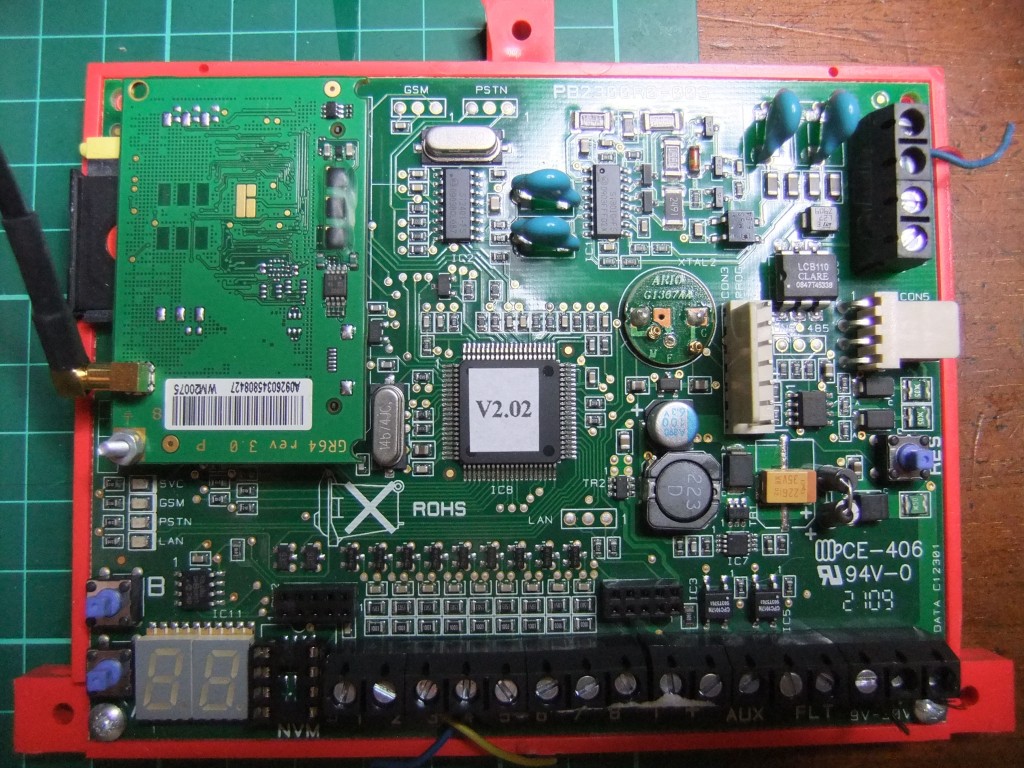

What are these CSL Dualcom CS2300-R devices? Very simply, they are a small box that sits between an intruder alarm and a monitoring centre, providing a communications link. When an alarm goes off, they send a signal to the monitoring centre for action to be taken. They can send this over a mobile network, normal phone lines, or the Internet.

They protect homes, shops, offices, banks, jewellers, data centres and more. If they don’t work, alarms may not reach the monitoring centre. If their security is poor, thousands of spoofed alarms could be generated. To me, it is clear that the security of these devices must be to a reasonable standard.

I am firmly of the opinion that the security of the CS2300-R devices is very poor. I would not recommend that new CSL Dualcom signalling devices are installed (regardless of model), and I would advise seeking an alternative provider if any were found on a pen-test. This is irrespective of the risk profile of the home or business.

If you do use any Dualcom signalling devices, I would be asking CSL to provide evidence that their newer units are secure. This would be a pen-test carried out by an independent third-party, not a test house or CSL.

What are the issues?

The report is long and has a number of issues that are only peripheral to the real problems.

I will be clear and honest at this point – the devices I have tested are labelled CS2300-R. It is not clear to me or others if these are the same as CS2300 Gradeshift or any other CS2300 units CSL have sold. It is also not clear which firmware versions are available, or what the differences between them are.

The devices were tested in the first half of 2014.

CSL have not specifically commented on any of the vulnerabilities. On 20 November they finally made a statement to CERT.

Here is a summary of what I think is wrong.

1. The encryption is fundamentally flawed and badly implemented

The encryption cipher used by the CSL devices is one step above the simple Caesar Cipher. The Caesar Cipher is known and used by many children to encrypt messages – each character is shifted up or down by a known amount – the “key”. For example, with the key of “4”, we have:

Plain text: THE QUICK BROWN FOX JUMPED OVER THE LAZY DOG

Key: 444 44444 44444 444 444444 4444 444 4444 444

Cipher text: XLI UYMGO FVSAR JSB NYQTIH SZIV XLI PEDC HSK
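The Caesar example above can be reproduced in a few lines of Python. This is only a minimal sketch of the textbook cipher, assuming upper-case input; the function name `caesar` is mine and has nothing to do with CSL’s code.

```python
def caesar(text, key):
    """Shift every letter A-Z by `key` places, wrapping round; leave spaces alone."""
    return "".join(
        chr((ord(c) - ord("A") + key) % 26 + ord("A")) if c.isalpha() else c
        for c in text
    )


plain = "THE QUICK BROWN FOX JUMPED OVER THE LAZY DOG"
cipher = caesar(plain, 4)
print(cipher)              # XLI UYMGO FVSAR JSB NYQTIH SZIV XLI PEDC HSK
print(caesar(cipher, -4))  # shifting back by the same key recovers the plain text
```

Anyone who knows (or guesses) the single number used as the key can undo the whole message.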

CSL’s encryption scheme goes one step further, and uses a different shift as you move along the message. It’s a hybrid between a shift cipher and a polyalphabetic substitution cipher.

The mechanism the algorithm uses is like so:

Plain text: THE QUICK BROWN FOX JUMPED OVER THE LAZY DOG

Key: 439 83746 97486 128 217218 9217 914 9127 197

Cipher text: XKN YXPGQ KYSET GQF LVTRFL XXFY CII UBBF EXN

The first character is shifted up by 4, the next by 3, then 9, 8, 3 etc. This is simplified, but not by much.
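The simplified example above (a toy, not CSL’s real table) can be sketched like this. The name `running_shift` is hypothetical, and the sketch assumes the key digits line up with the message character for character, as they do in the example.

```python
def running_shift(text, shifts):
    """Shift each letter by the digit in the same position of `shifts`."""
    out = []
    for ch, digit in zip(text, shifts):
        if ch == " ":
            out.append(" ")  # spaces pass through unchanged
        else:
            out.append(chr((ord(ch) - ord("A") + int(digit)) % 26 + ord("A")))
    return "".join(out)


plain = "THE QUICK BROWN FOX JUMPED OVER THE LAZY DOG"
shifts = "439 83746 97486 128 217218 9217 914 9127 197"
print(running_shift(plain, shifts))
# XKN YXPGQ KYSET GQF LVTRFL XXFY CII UBBF EXN
```

The only difference from the Caesar cipher is that the shift changes from one position to the next.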

Here is the actual algorithm as a simple Python script:

    iccid = "89441000300637117619"
    chipNumber = "510021"
    status = "15665555555555567"

    # This is the key stored in flash at x19da
    keyString = "0f15241e0919030d2a050e2329132c1014171b2726020c072201212d1a1c120a281f0b1d04250f1816112e2b2006082f41542a49503d"

    # Change the hex string into a list of integers (Python 3)
    key = list(bytes.fromhex(keyString))


    # Encrypts a string; startingVariable selects where in the key to begin
    def encrypt(stringToEncrypt, startingVariable):
        if startingVariable < 52:
            startingVariable -= 1
        else:
            startingVariable -= 51

        encryptedString = ""

        for y in stringToEncrypt:
            y = ord(y)

            # Input character constraint: 0-9 become 0..9, A-Z become 10..35
            if y < 0x41:
                y -= 0x30
            else:
                y -= 0x37

            # Add value from key to character
            y += key[startingVariable]

            # Output constraints: map the sum onto A-Z, then 0-9, then a-z
            if y < 0x25:
                if y < 0x1B:
                    y += 0x40
                else:
                    y += 0x15
            else:
                y += 0x3C

            startingVariable += 1

            # Keep startingVariable within bounds - oddly smaller bounds than initial check
            if startingVariable > 47:
                startingVariable = 0

            encryptedString += chr(y)

        return encryptedString


    # Decrypts a string by reversing each step of encrypt
    def decrypt(stringToDecrypt, startingVariable):
        if startingVariable < 52:
            startingVariable -= 1
        else:
            startingVariable -= 51

        decryptedString = ""

        for y in stringToDecrypt:
            y = ord(y)

            # Undo the output constraints
            if y < 0x61:
                if y < 0x41:
                    y -= 0x15
                else:
                    y -= 0x40
            else:
                y -= 0x3C

            # Subtract value from key
            y -= key[startingVariable]

            # Undo the input character constraint
            if y < 0x0a:
                y += 0x30
            else:
                y += 0x37

            startingVariable += 1

            if startingVariable > 47:
                startingVariable = 0

            decryptedString += chr(y)

        return decryptedString


    stringStatus = iccid + "A" + chipNumber + status
    startingVariable = 52
    encryptedString = encrypt(stringStatus, startingVariable)

    print("   Status: %s" % stringStatus)
    print("Encrypted: %s" % encryptedString)

It would be fair to say that this encryption scheme is very similar to a Vigenère cipher, first documented in 1553 and used fairly widely until the early 1900s. However, today, even under perfect use conditions, the Vigenère cipher is considered completely broken. It is only used for teaching cryptanalysis and by children for passing round notes. It is wholly unsuitable to use for electronic communications across an insecure network.

Notice that I said the Vigenère cipher was broken “even under perfect use conditions”. CSL have made some bad choices around the implementation of their algorithm. The cipher has been abused and is no longer in perfect use conditions.

An encryption scheme where the attacker knows the key and the cipher is totally broken – it provides no protection.

And CSL have given away the keys to the kingdom.

The key is the same for every single board. The key cannot be changed. The key is easy to find in the firmware.

An encryption scheme where the attacker knows the key and the cipher is completely and utterly broken.

Beyond that, CSL make a number of elementary mistakes in the protocol design. Even if the key were not known and fixed, it could easily be recovered from observing a very limited number of sent messages. The report details some of these mistakes. They aren’t subtle mistakes: they are glaring errors and omissions.
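To give a feel for why so few messages would be needed, here is a rough sketch of a known-plaintext recovery. It is an illustration under assumptions, not necessarily the method in the report: it assumes the attacker can guess one message’s contents (the ICCID and chip number are hardly secret) and has captured the matching ciphertext. The message pair is the worked example from the standards section of this post, and the function names are mine.

```python
def plain_value(ch):
    """Plain-text character -> number, as in the script above (0-9, then A-Z)."""
    c = ord(ch)
    return c - 0x30 if c < 0x41 else c - 0x37


def cipher_value(ch):
    """Cipher-text character -> number (A-Z, then 0-9, then a-z)."""
    c = ord(ch)
    if c >= 0x61:
        return c - 0x3C
    if c >= 0x41:
        return c - 0x40
    return c - 0x15


def recover_key_stream(known_plain, observed_cipher):
    """Each key entry is simply cipher value minus plain value."""
    return [cipher_value(c) - plain_value(p)
            for p, c in zip(known_plain, observed_cipher)]


known_plain = "89441012345678901237" + "A" + "123456" + "1" * 15 + "7"
observed = "2i7MZCNhHRdkZpYTX2fiLMIaEbo02SKe5L3EbPYWRkn"
recovered = recover_key_stream(known_plain, observed)

# Compare against the table taken from the firmware
firmware_key = list(bytes.fromhex(
    "0f15241e0919030d2a050e2329132c1014171b2726020c07"
    "2201212d1a1c120a281f0b1d04250f1816112e2b2006082f"))
print(recovered == firmware_key[1:44])  # True
```

One 43-character message gives up 43 of the 48 table entries, with no access to the firmware at all.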

I cannot stress enough how bad this encryption is. Whoever developed it doesn’t even have basic knowledge of protocol design, never mind secure protocol design. I would expect this level of work to come from a short piece of coursework by A-level IT students, not a security company.

2. Weak protection from substitution

The CS2300-R boards use two pieces of information to identify themselves. One is the 20-digit ICCID – most people would know this as the number on a SIM card. The other is a 6-digit “chip number”.

Both of these are sent in each message – the same message which we can easily decrypt. This leads to an attacker being able to easily determine the identification information, which could then be used to spoof messages from the device.

Beyond that, installers actually tweet images of the boards with either the ICCID or chip number clearly visible: 1, 2, 3.

This is a very weak form of substitution protection. There are many techniques which can be used to confirm that an embedded device is genuine without using information sent in the open, such as using a message authentication code or digital signature.
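As a hedged illustration of the message authentication code approach, the sketch below tags each message with HMAC-SHA256 under a per-device secret. Everything here is an assumption for the sake of the example: the key, the counter and the function names are hypothetical, and this does not describe how CSL or any competitor actually works.

```python
import hashlib
import hmac


def make_tag(device_key: bytes, counter: int, payload: bytes) -> bytes:
    """Tag covers a message counter as well as the payload, so old messages cannot be replayed."""
    message = counter.to_bytes(8, "big") + payload
    return hmac.new(device_key, message, hashlib.sha256).digest()


def is_genuine(device_key: bytes, counter: int, payload: bytes, tag: bytes) -> bool:
    """Constant-time comparison of the expected tag with the received one."""
    return hmac.compare_digest(make_tag(device_key, counter, payload), tag)


device_key = bytes(range(32))  # placeholder; a real key would be random and unique per device
payload = b"89441000300637117619A510021"
tag = make_tag(device_key, 1, payload)

print(is_genuine(device_key, 1, payload, tag))                                  # True
print(is_genuine(device_key, 1, payload.replace(b"510021", b"510022"), tag))    # False
```

Knowing the ICCID and chip number is then not enough to impersonate a board; the attacker would also need the secret key, which never travels in the message.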

3. Unable to perform firmware updates over-the-air

It is both presumptuous and foolhardy to assume that the device you deploy on day one will be free of bugs and security issues.

This is why computers, phones, routers, thermostats, set-top boxes, and even cars, allow for firmware updates over-the-air. Deployed devices can be updated in the field, after bugs and vulnerabilities have been fixed.

It has been considered – for many years – that over-the-air firmware updates are an absolutely vital part of the security of any connected embedded system.

The CS2300-R boards examined have no capability for firmware update without visiting the board with a laptop and programmer. No installers that I questioned own a programmer. The alarm system needs to be put into engineering mode. The board needs to be removed from the alarm enclosure (and, most of the time it is held in with self-adhesive pads, making this awkward). The plastic cover needs to be removed. The programmer needs to be connected, and then the firmware updated. Imagine doing that for 100 boards, all at different sites.

At this point, we need to remember that CSL claim to have over 300,000 deployed boards.

If we imagine that it takes a low estimate of 5 minutes to update each of the 300,000 boards, that is 25,000 hours of work: over 1,000 man-days of effort even if every one of those days were a full 24 hours. If you use a more realistic time estimate, the amount of effort becomes scary.
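The arithmetic is easy to check. The 8-hour working day below is my own assumption for comparison; the 5 minutes and 300,000 boards are the figures used above.

```python
BOARDS = 300_000
MINUTES_PER_BOARD = 5           # the deliberately low estimate
WORKING_HOURS_PER_DAY = 8       # assumption: a normal engineer's day

total_hours = BOARDS * MINUTES_PER_BOARD / 60
days_round_the_clock = total_hours / 24
working_days = total_hours / WORKING_HOURS_PER_DAY

print(total_hours)                   # 25000.0
print(round(days_round_the_clock))   # 1042
print(working_days)                  # 3125.0
```

That is before any travel time between sites is counted.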

This means that any issues found cannot and will not be fixed on deployed boards.

CSL have confirmed that none of their devices support over-the-air firmware updates.

CSL have given away the keys to the kingdom and cannot change the locks.

A software development life-cycle that has a product that cannot be updated fosters a do-not-care attitude. Why should CSL care about vulnerabilities if they cannot fix them? What would be their strategy if a serious, remotely exploitable vulnerability was found?

4. I do not believe the CS2300-R boards are standards compliant

One of the standards governing alarm signalling equipment is EN50136. This is a series of lengthy documents that are unfortunately not open access.

Only a small part actually discusses encryption and integrity. Even when they are discussed, it is in loose terms.

CSL have stated, in reference to my report:

As with all our products, this product has been certified as compliant to the required European standard EN-50136

Unfortunately, CSL will not clarify which version of the standards the CS2300-R devices have been tested to.

I will quote the relevant section from EN50136-1-5:2008:

To achieve S1, S2, I1, I2 and I3 encryption and/or hashing techniques shall be used.

When symmetric encryption algorithms are used, key length shall be no less than 128 bits. When other algorithms are deployed, they shall provide similar level of cryptographical strength. Any hash functions used shall give a minimum of 256 bits output. Regular automatic key changes shall be used with machine generated randomized keys.

Hash functions and encryption algorithms used shall be publicly available and shall have passed peer review as suitable for this application.

These security measures apply to all data and management functions of the alarm transmission system including remote configuration, software/firmware changes of all alarm transmission equipment.

There are newer versions of the standard, but they are fundamentally the same.

My interpretation of this is as follows:

Cryptography algorithms used should be in the public domain and peer reviewed as suitable for this application.

We are talking DES, AES, RSA, SHA, MD5. Not an algorithm designed in-house by someone with little to no cryptographic knowledge. The cryptography used in the CS2300-R boards examined is unsuitable for use in any system, never mind this specific application.

When the algorithm was sent as a Python script to one cryptographer, they assumed I had sent the wrong file because it was so bad.

The next one I sent it to said:

I’ve never thought I’d see the day that someone wrote a variant on XOR encryption that offered less than 8 bits of security, but here we are.

We’re talking nanosecond scale brute force.

Key length should be 128 bits if a symmetric algorithm is used.

A reasonable interpretation of this requirement is that the encryption should provide strength equivalent to AES-128 against brute-force attacks. AES-128 will currently resist brute-force for longer than the universe has existed. This is strong enough.

The CSL algorithm is many orders of magnitude less secure than this. Given the fixed mapping table, a message can be decrypted in the order of nanoseconds.
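To put rough numbers on that gap, here is a back-of-the-envelope comparison. The guessing rate is a hypothetical figure picked for illustration, and the CSL figure assumes the only thing an attacker does not already know is the starting position in the 48-entry table.

```python
import math

GUESSES_PER_SECOND = 10 ** 12        # hypothetical attacker speed
SECONDS_PER_YEAR = 365.25 * 24 * 3600
AGE_OF_UNIVERSE_YEARS = 1.38e10      # roughly 13.8 billion years

# Time to try every AES-128 key at that speed
aes_years = 2 ** 128 / GUESSES_PER_SECOND / SECONDS_PER_YEAR

# CSL: the table is fixed, so at most 48 starting positions to try
csl_positions = 48
csl_bits = math.log2(csl_positions)
csl_seconds = csl_positions / GUESSES_PER_SECOND

print("AES-128: %.2e years" % aes_years)
print("CSL: %.1f bits, %.1e seconds" % (csl_bits, csl_seconds))
```

Under these assumptions AES-128 takes around 10^19 years to exhaust, hundreds of millions of times the age of the universe, while the CSL scheme comes in under 6 bits, which is consistent with the "less than 8 bits" remark above.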

Regular automatic key changes shall be used with machine generated randomised keys

Regular is obviously open to interpretation. There is a balance to be struck here. You need to change keys often enough that they cannot be brute-forced or uncovered. But key exchange is a risky process – keys can be sniffed and exchanges can fail (resulting in loss of communications).

Regardless, the CS2300-R boards have no facility at all for changing the keys. The key is in the firmware and can never change.

All data and management functions including remote configuration should be protected by the encryption detailed.

The CS2300-R boards have a documented SMS remote control system, protected by a 6-digit PIN. This is not symmetric encryption, this is not 128-bit, this is not peer-reviewed.

This is a remote control system protected by a short PIN (and it seems that PIN is often the same – 001984 – and installers don’t have the ability to change it).

Data should be protected from accidental or deliberate alteration

There is nothing in the protocol to protect against alteration. There is no MAC, no signature. Nothing. Not even a basic checksum or parity bit.

The message can easily be altered by accident or by malice. Look at this example:

Status: 89441012345678901237A1234561111111111111117

Encrypted: 2i7MZCNhHRdkZpYTX2fiLMIaEbo02SKe5L3EbPYWRkn

Shift: 0000000000000000000000000004444444444444440

Altered: 2i7MZCNhHRdkZpYTX2fiLMIaEbo46WOi9P7IfT20Von

Decrypted: 89441012345678901237A1234565555555555555557

By adding four to a character in the encrypted text, we add four to the decrypted character. This means that the message content has been altered significantly, and very easily. The altered part of the message is the alarm status.
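The alteration above can be reproduced with a short sketch. Note that `tamper` never touches the key; the key is only used at the end to show what the receiver would decrypt. The helper names are mine, and "adding four" means four places along the cipher’s own output alphabet (A-Z, then 0-9, then a-z), which is why Y becomes 2 and W becomes 0.

```python
KEY = list(bytes.fromhex(
    "0f15241e0919030d2a050e2329132c1014171b2726020c072201212d1a1c120a"
    "281f0b1d04250f1816112e2b2006082f41542a49503d"))


def cipher_value(ch):
    """Cipher-text character -> position in the output alphabet."""
    c = ord(ch)
    if c >= 0x61:
        return c - 0x3C
    if c >= 0x41:
        return c - 0x40
    return c - 0x15


def cipher_char(v):
    """Position in the output alphabet -> cipher-text character."""
    if v < 0x1B:
        return chr(v + 0x40)
    if v < 0x25:
        return chr(v + 0x15)
    return chr(v + 0x3C)


def tamper(ciphertext, start, end, delta):
    """Add delta to the characters in [start, end) without knowing the key."""
    chars = list(ciphertext)
    for i in range(start, end):
        chars[i] = cipher_char(cipher_value(chars[i]) + delta)
    return "".join(chars)


def decrypt(ciphertext, starting_variable):
    """Same logic as the decrypt function in the script earlier in this post."""
    index = starting_variable - 1 if starting_variable < 52 else starting_variable - 51
    out = ""
    for ch in ciphertext:
        v = cipher_value(ch) - KEY[index]
        out += chr(v + 0x30) if v < 0x0A else chr(v + 0x37)
        index = 0 if index + 1 > 47 else index + 1
    return out


encrypted = "2i7MZCNhHRdkZpYTX2fiLMIaEbo02SKe5L3EbPYWRkn"
altered = tamper(encrypted, 27, 42, 4)
print(altered)               # 2i7MZCNhHRdkZpYTX2fiLMIaEbo46WOi9P7IfT20Von
print(decrypt(altered, 52))  # the fifteen 1s in the status field are now 5s
```

The receiver has no way to tell the altered message from a genuine one.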

I can see no way that these units are compliant with this standard. CSL, however, say they are certified.

5. I do not believe third-party certification is worthwhile

CSL has obtained third-party certification for CS2300 units. When I first heard this, I was astonished – how is it compliant with the standard?

It’s still not actually clear which units have been tested. The certificate says CS2300, my units say CS2300-R, but CSL say that the units I have looked at have been tested.

After meeting with the test house I have an idea of what happened here.

The test house would not discuss the CS2300 certification specifically, due to client confidentiality. They did discuss the EN50136 standard and testing in general.

Firstly, I was not reassured that the test house has the technical ability to test any cryptographic or electronic security aspects of the standard. Their skills do not lie in this area. At a number of points during the meeting I was surprised about their lack of knowledge around cryptography.

Secondly, there are areas of standards where a manufacturer can self-declare that they are compliant. The test house expected, if another unit was to be tested, the sections on encryption and electronic security would be self-declared by the manufacturer. Note that the test house can still scrutinise evidence around a self-declaration.

Thirdly, there is no way for a third-party to see any detail around the testing without both the manufacturer and test house agreeing to release the data. To everyone else, it’s just a certificate.

From this, I can infer that the CS2300 – and probably other signalling devices, even from other manufacturers – have not actually had the encryption or other electronic security tested by a competent third-party.

I don’t feel that this is made clear enough by either manufacturers or test houses.

6. I do not think the standard is strict enough

I acknowledge that the standard must cover a range of devices, of different costs, protecting different risks, and across the EU. It must be a lot of work drawing up such a standard.

Regardless of this, the section on encryption and substitution protection is so wishy-washy that it would be entirely possible to build a compliant device that had gaping security holes in it.

Encryption, by itself, is not enough to maintain the security of a system. This is widely known in the information security and cryptography world. It’s perfectly possible to chain together sound cryptographic primitives into a useless system. There is nothing in the standard to protect against this.

7. CSL do not have a security culture

There are so many issues with the CS2300-R system it is almost unbelievable.

Other aspects of CSL’s information security are similarly weak: leaking their customer database, no TLS on the first revision of their app, an awful apprenticeship website, no TLS on their own shop, misconfiguration of TLS on their VPN server, letting staff use Hotmail in their network operations centre… it goes on.

(It is worth noting that CSL added TLS to their shop and fixed the VPN server after I blogged about them a few weeks ago – why does it take blog posts before trivially simple issues are fixed?)

CSL do not have a vulnerability disclosure policy. It was clear that CSL did not know how to handle a vulnerability report.

CSL have refused to discuss any detail without a non-disclosure agreement in place.

There is no evidence that CSL’s security has undergone any form of scrutiny. Even a rudimentary half-day assessment would have picked up many of the issues with their website.

There is also a degree of spin in their marketing and sales. A number of installers and ARCs questioned believe that the device forms a VPN to CSL’s server. Some also believe that the device uses AES-256. Indeed, their director of IT, Santosh Chandorkar, claimed to me that the CS2300-R formed a VPN with their servers. There is no evidence in the firmware to support any of these claims, but there is also no way for a normal user to confirm what is and isn’t happening.

At a meeting, Rob Evans implied that it would be my fault should these issues be exploited after I released them. He used the example of someone getting hurt on a premises protected by their devices. It obviously would not be the fault of the company that developed the system.

At one point, when this was raised on a forum, someone claiming to be a family friend of Rob Evans accused me of hacking his DVR and spying on his kid, whilst at the same time attempting to track me down and make threats of violence. The same person has boasted about this on other forums.

I have asked Rob Evans to confirm or deny if he knows this person. As of today, I have had no response.

Another alarm installer going by the handle of Cubit is repeatedly stating that I am attempting to extort money from security manufacturers:

Not when he tries to hold a company to ransom, no!

Remember reading his article about the (claimed) flaws in the <redacted> product?? No, thought not. They paid to keep him quiet.

Oddly, the MD of the same company came along to state that this wasn’t the case.

I think it’s disturbing that, rather than pay attention to potential issues, defenders of CSL act like this.

And there is this gem from Simon Banks, managing director of CSL Dualcom:

IP requires elaborate encryption because it sends data across the open Internet. In my 25 years’ experience I’ve never been aware of a signalling substitution or ‘hack’, and have never seen the need for advanced 128 bit encryption when it comes to traditional security signalling.

No need for 128 bit encryption, Simon. Only the standard.

Conclusion

The seven issues to take away from this are:

- CSL have developed incredibly bad encryption, on a par with techniques that were state of the art in the time before computers.

- CSL have not protected against substitution very well

- CSL can’t fix issues when they are found because they can’t update the firmware

- There seems to be a big gap between the observed behaviour of the CS2300-R boards and the standards

- It’s likely that the test house didn’t actually test the encryption or electronic security

- Even if a device adheres to the standard, it could still be full of holes

- CSL either lack the skill or drive to develop secure systems, making mistake after mistake

What do I think should happen as a result of this?

- All signalling devices should be pen-tested by a competent third-party

- A cut-down report should be available to users of the devices, detailing what was tested and the results of the testing

- The standards, and the standards testing, need to include pen-testing rather than compliance testing

- The physical security market needs to catch up with the last 10 years of information security

Rebuttals

CSL have made some statements about this.

This only impacts a limited number of units

CSL have stated:

Of the product type mentioned in his report there are only around 600 units in the field

What product type mentioned? Units labelled CS2300-R? Speaking to installers, the CS2300-R seems to be incredibly common.

If it is only a subset of units labelled CS2300-R, how does a user work out which ones are impacted?

The other 299,400 devices may not be the same unit, but how do they differ? Has a competent third-party tested the encryption and electronic security?

We have done an internal review

CSL have stated:

Our internal review of the report concluded there is no threat to these systems

Ask yourself this: if someone has deployed a system with this many issues in it, why should you trust their judgement as to the security of the system now? Are they competent to judge? There is no evidence that they are.

They have been third-party tested

CSL have stated, specifically in reference to my report:

As with all our products, this product has been certified as compliant to the required European standard EN-50136

This worries me. This says that the very device I have examined – the one full of security problems – got past EN-50136 testing. If this device can pass, practically anything can pass.

But I am fairly sure that the standards testing essentially allows the manufacturer to complete the exercise on paper alone.

The devices are old

The product tested was a 6 year old GPRS/IP Dualpath signalling unit.

Firstly, there are at least 600 of these still in service.

Secondly, when the research was carried out, the boards were 4.5 years old.

Thirdly, does that mean that a 6 year old product is obsolete? Does that mean they don’t support it any more?

The threat model isn’t the one we are designed for

This testing was conducted in a lab environment that isn’t representative of the threat model the product is designed to be implemented in line with. The Dualpath signalling unit is designed to be used as part of a physically secured environment with threat actors that would not be targeting the device but the assets of the device End User.

This seems to have been a sticking point with some of the more backwards members of the security industry as well.

The reverse engineering work was done in a lab. As with nearly all vulnerability research, there needs to be a large initial investment in time and effort. Once vulnerabilities have been found, they can be exploited outside of the lab environment.

If the threat actors aren’t targeting the device, why bother with dual path?

Again, it doesn’t look like the devices comply with the standards. This is what counts.

They aren’t remotely exploitable

No vulnerabilities were identified that could be exploited remotely via either the PSTN connectivity or GPRS connection which significantly reduces the impact of the vulnerabilities identified.

I disagree with this. CSL and a number of their supporters do not seem to want to accept that GPRS data can no longer be classed as secure.

This still leaves the gaping holes on the IP side. When I met CSL at IFSEC 2014, they strongly implied that the number of IP units they sold was negligible. There seem to be more than a few getting installed though.

The price point is too low

The price point for the DualCom unit is £200 / $350. CSL DualCom also have devices in their portfolio that are tamper resistant or tamper evident to enable customers to defend against more advanced or better funded threat actors. Customers are then able to spend on defence in line with the value of their assets.

I’m not sure why the price is relevant. Are CSL saying it’s too cheap to be properly secure?

I can’t find any of these tamper resistant or tamper evident devices for sale – it would be interesting to see what they are.

Very few of the issues raised involve physically tampering with the device. They are generally installed in a protected area.

These aren’t problems, but we are releasing a product that fixes the issues

If customers are concerned about the impact of these vulnerabilities CSL are releasing a new product in May which addresses all of the areas highlighted.

So on one hand, these vulnerabilities aren’t issues, but they are issues enough that you’ve developed a new product to fix them? Righty ho.

Firmware updates are vulnerable, but not normal communications

CSL products are not remotely patchable as we believe over the air updates could be susceptible to compromise by the very threat actors we are defending against.

What?

Just a few paragraphs ago, you said that you are not protecting against the kind of threat actor that can carry out attacks as in the report. But you are protecting against a threat actor that can intercept firmware updates?

Why allow critical settings to be changed over SMS if this is an issue?

What-if rebuttals

These are things that haven’t been directly stated by CSL or others, but I suspect that people will raise them.

These issues are not being exploited

During discussions with CSL, they seemed very focused on what has happened in the past. I had no evidence of attacks being carried out against their system, and neither did they. Therefore, in their eyes, the vulnerabilities were not an issue.

This is an incredibly backwards view of security. The idea of a botnet of DVRs mining cryptocurrency would have seemed ridiculous 5 years ago. The idea of a worm, infecting routers and fixing security problems even more so. The Internet changes. The attackers change. Knowledge changes.

Failing to keep up with these changes has been the downfall of many systems.

But we haven’t detected any issues

This entirely misses the point.

The end result of these vulnerabilities is that it is highly likely that a skilled attacker could spoof another device undetected.

We don’t mind issues being brought to us privately

These issues were brought to CSL’s attention, privately, 17 months ago.

That is ample time to act.

He works for a competitor

Firstly, I don’t. I have spoken to competitors to find out how they work.

Secondly, this would not detract from the glaring holes in the system.

He is blackmailing people in the security industry

I have released vulnerabilities in Visonic and Risco products. Shortly, there will be a vulnerability in the RSI Videofied systems. None of these companies have been asked for payment, and all have been given 45+ days to respond to issues. This is a fair way of disclosing issues.

I do paid work with others in the security industry. Again, at no point has payment been requested to keep issues quiet.

I have never asked CSL for payment. At several points they have asked to work with me, which I have turned down as I don’t think their security problems are going to be resolved given their culture.

The encryption and electronic security are adequate

It’s hard to explain (to someone outside of infosec) just how bad the encryption is. It is orders of magnitude less strong than encryption used by Netscape Navigator in 2001.

The problems found have been widely known for 20+ years, and many are easy to protect against. Importantly, it appears that their competitors – at least WebWayOne and BT Redcare – aren’t making the same mistakes.

The GPRS network is secure

This was true 15 years ago. It is now possible – cheaply and easily – to spoof a cell site and then intercept GPRS communications. You cannot rely on the security of the GPRS network alone.

Further to this, exactly the same protocol is used over the Internet.

But above all, the standards don’t differentiate between GPRS and the Internet – they are both packet switched networks and must be secured similarly.

We take our customers’ security seriously

So does every other company that has been the subject of criticism around security.

I would argue that letting your customer database leak is not taking security seriously.