If you have worked with embedded systems, you will have come across terms like checksums, hashing, password hashes, and CRCs. Whilst checksums and hashes are two distinct terms, they are often used for similar purposes. They are also often used incorrectly.

What is a checksum?

A checksum is a short piece of data derived from a longer block of data, generally for the purpose of detecting errors that have been introduced. If the checksum does not match the data, then something has gone wrong.

They can be used to ensure data integrity i.e. has this data been accidentally modified? A good checksum makes it highly unlikely that the data could be accidentally changed in a way that still matches the checksum.

They cannot be used to ensure data authenticity i.e. has someone maliciously altered this data? If an attacker can modify the data, they can recalculate the checksum so that the changes cannot be detected.

Message: The quick brown fox jumped over the lazy dog.

Checksum: 82a34642

Message: The quick brown cat jumped over the lazy rat.

Checksum: 0319c205

There are many common forms of checksum.

Check digits are added to numbers all around us: credit card numbers, IMEI numbers for phones, VIN numbers on cars. These are generally designed to detect human errors, such as off-by-one (3->4) and transposition (54->45).
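As an illustration, credit card and IMEI numbers use a check digit scheme called the Luhn algorithm. The sketch below is a minimal Python version; the function name `luhn_valid` and the sample number are our own choices for the example, not taken from any particular product.

```python
def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn check."""
    total = 0
    # Walk the digits right to left, doubling every second one.
    for position, char in enumerate(reversed(number)):
        digit = int(char)
        if position % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9  # same as adding the two digits of the product
        total += digit
    return total % 10 == 0


print(luhn_valid("79927398713"))  # True: a commonly used valid test number
print(luhn_valid("79927398714"))  # False: last digit off by one
print(luhn_valid("79927398731"))  # False: last two digits transposed
```

Both of the human errors described above are caught in this case, although Luhn does not catch every possible transposition.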

Parity bits are used in communications. The number of 1s in the data is counted. If it’s an even number of 1s, the parity bit is 0. If it’s an odd number of 1s, the parity bit is 1. The receiver simply needs to count the 1s across all the bits, including the parity bit. If it’s not an even number of 1s, then an error has been introduced. Clearly this has issues – if two bits are flipped, the error will not be noticed!
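A minimal sketch of even parity in Python, showing the two-bit weakness. The function names and the sample byte are illustrative assumptions.

```python
def even_parity_bit(data: bytes) -> int:
    """Parity bit that makes the total number of 1s even."""
    ones = sum(bin(byte).count("1") for byte in data)
    return ones % 2


def parity_ok(data: bytes, parity_bit: int) -> bool:
    """Receiver side: count every 1, including the parity bit."""
    ones = sum(bin(byte).count("1") for byte in data) + parity_bit
    return ones % 2 == 0


sent = bytes([0b10110010])        # four 1s, so the parity bit is 0
parity = even_parity_bit(sent)

one_flip = bytes([0b10110011])    # one bit flipped in transit
two_flips = bytes([0b10110001])   # two bits flipped in transit

print(parity_ok(sent, parity))       # True
print(parity_ok(one_flip, parity))   # False: error detected
print(parity_ok(two_flips, parity))  # True: error goes unnoticed
```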

Modulo checksums are used frequently in custom radio frequency protocols and wire protocols. You sum up the value of each byte sent, then perform a modulo 256 operation i.e. what’s the remainder when divided by 256.

If we want to send the following data:

45 67 AB 43 23 98 FD E1

We sum each byte to get 0x433 – too big to fit in a single byte! So we do “modulo 0x100”, leaving us with a remainder of 0x33. Generally, we take what is called the “two’s complement” (0x100 – 0x33 -> 0xCD) of that and append it. Why? Because now if we add all the bytes together and do modulo 0x100, we should get 0 if all is well.
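The arithmetic above can be checked with a few lines of Python. This is only a sketch of one common variant; the name `modulo_checksum` is our own, and real protocols differ in the details.

```python
def modulo_checksum(data: bytes) -> int:
    """Two's complement of the byte sum, modulo 0x100."""
    return (-sum(data)) % 0x100


payload = bytes.fromhex("4567AB432398FDE1")
checksum = modulo_checksum(payload)
frame = payload + bytes([checksum])

print(hex(sum(payload)))   # 0x433
print(hex(checksum))       # 0xcd
print(sum(frame) % 0x100)  # 0, so the receiver accepts the frame
```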

Cyclic Redundancy Checks (CRCs) are more complex but found everywhere around us. There are hundreds of common variants, with different lengths – CRC-8, CRC-16, CRC-32 – and different “polynomials” – a set of coefficients used. Although they have all these variations and can be more challenging to reverse engineer, this does not mean they can prove data is authentic.
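To make the “polynomial” idea a little more concrete, here is a bit-by-bit sketch of the CRC-32 variant used by zlib, PNG and Ethernet, compared against Python's built-in `zlib.crc32`. The function name `crc32_bitwise` is ours; other CRC variants use different polynomials, initial values and bit ordering.

```python
import zlib


def crc32_bitwise(data: bytes) -> int:
    """Bit-by-bit CRC-32 using the reflected polynomial 0xEDB88320."""
    crc = 0xFFFFFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xEDB88320
            else:
                crc >>= 1
    return crc ^ 0xFFFFFFFF


check = crc32_bitwise(b"123456789")
print(f"{check:08X}")                        # CBF43926, the standard check value
print(check == zlib.crc32(b"123456789"))     # True
print(check == zlib.crc32(b"123456780"))     # False: one changed character
```

Note that nothing in this calculation is secret. Anyone who knows or guesses the variant can recalculate the CRC for modified data.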

Keeping a Checksum the same

There are situations where an attacker cannot modify both the data and the checksum. For example, the checksum of a firmware image may be displayed on the manufacturer’s website, where the attacker cannot change it, while the attacker wants to modify the firmware itself.

The issue with the checksums mentioned above is that it is nearly always possible to modify the data and keep the checksum the same.

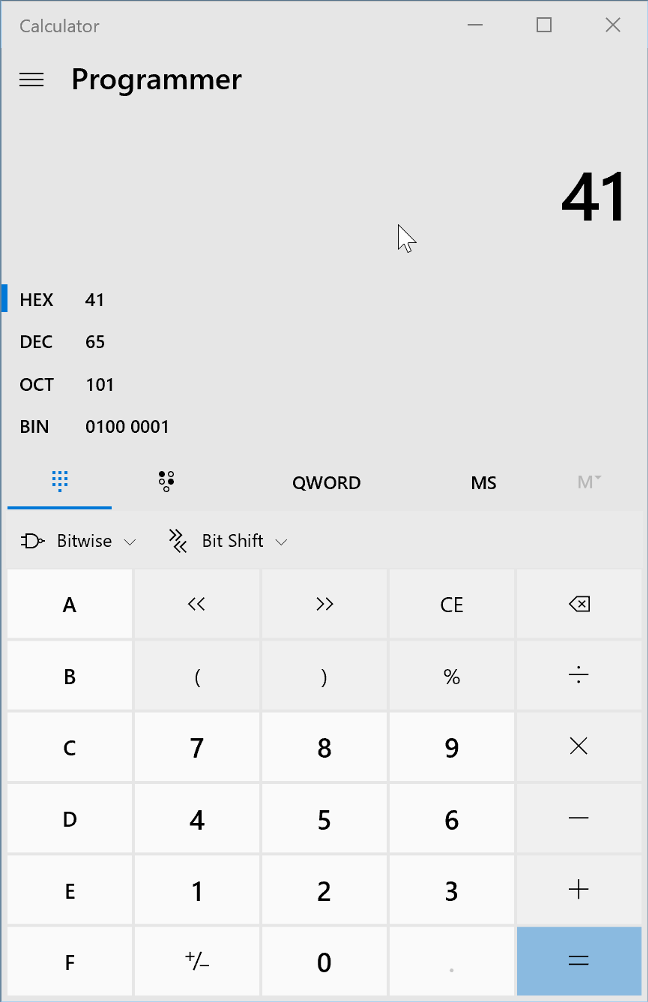

With the modulo checksum, this is trivial. If one byte is increased by 1, another byte must be reduced by 1 to maintain the same checksum. This can be really easily shown with ASCII text. Changing a letter’s case adds or subtracts 0x20 from the value. A is 0x41, a is 0x61. 8 times 0x20 is 0x100, so change the case of 8 characters in the same direction, and the modulo checksum doesn’t change!
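The case-flipping trick can be reproduced in Python with the pair of messages used in this section. The helper name `modulo_checksum` is our own, and we assume the two's complement variant described earlier.

```python
def modulo_checksum(data: bytes) -> int:
    """Two's complement of the byte sum, modulo 0x100."""
    return (-sum(data)) % 0x100


original = b"I really like bananas very much."
modified = b"I really LIKE bananas VERY much."

# Eight letters moved from lower to upper case: 8 * 0x20 = 0x100.
print(sum(original) - sum(modified))       # 256
print(f"{modulo_checksum(original):02x}")  # 74
print(f"{modulo_checksum(modified):02x}")  # 74
```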

Message: I really like bananas very much.

Checksum: 74

Message: I really LIKE bananas VERY much.

Checksum: 74

It’s not as easy with CRCs, but by modifying any 4 consecutive bytes in a file, the checksum can be kept at the same value. This does mean additional bytes need to be changed, but these can often be squeezed into padding or irrelevant text.

Message: I really like bananas. This bit is not important.

CRC-32: 8D00795D

Message: I really HATE bananas. This biN�`Z not important.

CRC-32: 8D00795D

What is a hash?

A hash function is a deterministic function that maps an arbitrary sized piece of data to a fixed-sized piece of data.

Technically, the checksums mentioned above are actually hash functions – you can provide any length of input to parity or a modulo checksum, and get a fixed length output.

However, in security, we are generally talking about something called a cryptographic hash function.

You will probably be familiar with some of the common ones: MD5 and SHA are the most well known.

First, I’d like to talk about an inherent quality of hash functions that follows from the pigeonhole principle. If you have 9 pigeonholes and 10 pigeons, then at least one of the pigeonholes must contain more than 1 pigeon.

Because a hash function maps something of arbitrary length into something of fixed length, there will always be multiple inputs that result in the same output. There are infinite numbers of arbitrary length inputs, and only a finite number of outputs.

As a result, collisions exist – two different inputs that result in the same output.
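The pigeonhole argument can be demonstrated with a deliberately tiny hash. In this sketch, `tiny_hash` is a made-up 8-bit hash (the first byte of SHA-256), so it has only 256 possible outputs and 257 different inputs are guaranteed to contain a collision.

```python
import hashlib


def tiny_hash(data: bytes) -> int:
    """Toy 8-bit hash: the first byte of SHA-256, so only 256 outputs."""
    return hashlib.sha256(data).digest()[0]


def find_collision():
    """Hash 257 distinct inputs; two of them must share an output."""
    seen = {}
    for i in range(257):
        message = str(i).encode()
        value = tiny_hash(message)
        if value in seen:
            return seen[value], message, value
        seen[value] = message
    raise AssertionError("unreachable: 257 inputs cannot fit 256 outputs")


first, second, value = find_collision()
print(first, second, hex(value))
```

Real cryptographic hashes have the same property. The output space is simply so large that stumbling on a collision this way is not practical.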

An ideal cryptographic hash function should hold certain properties.

It must be deterministic – if you provide it with the same input twice, you should get the same output. This one seems fairly obvious – if the purpose of the hash function is to detect errors or similar, it must always behave the same.

password

5f4dcc3b5aa765d61d8327deb882cf99

password

5f4dcc3b5aa765d61d8327deb882cf99

It should be quick to calculate the hash. Remember how it works for arbitrary data size? You should be able to quickly hash a short sentence or the entire contents of Wikipedia.

It should be infeasible to determine a message that yields a given hash value. This is given the fancy name of “preimage resistance“.

4b6a6c37c7f8777a247fc727dbd2658d

????

It should be infeasible to determine two messages that yield the same hash value. This is given the fancy name of “collision resistance”. We know that collisions will exist, but they should not be easy to find.

A small change in the input should result in a large change in the output. This is called the “avalanche effect“. This makes sense later when we consider some of the use cases.
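A minimal sketch of the avalanche effect using Python's `hashlib` and the two MD5 values shown in this section. The inputs differ by a single bit (`p` is 0x70, `P` is 0x50); the helper `differing_bits` is our own.

```python
import hashlib


def differing_bits(a: bytes, b: bytes) -> int:
    """Count the bit positions where two equal-length values differ."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


lower = hashlib.md5(b"password").digest()
upper = hashlib.md5(b"Password").digest()

print(lower.hex())  # 5f4dcc3b5aa765d61d8327deb882cf99
print(upper.hex())  # dc647eb65e6711e155375218212b3964
print(differing_bits(lower, upper), "of", len(lower) * 8, "bits differ")
```

Roughly half of the output bits change, which is what you would expect if the two outputs were unrelated.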

password

5f4dcc3b5aa765d61d8327deb882cf99

Password

dc647eb65e6711e155375218212b3964

Uses

So what do you use these cryptographic hash functions for?

Error Detection

Similar to checksums, hashes can be used to detect errors or changes in data.

Exactly as with checksums, if an attacker can modify both the data and hash, there is no way to determine if the data has been changed. Hashes are not safe against an active attacker, only accidental modification.

Most hashes have an output that is longer than a checksum. The longest commonly used checksum is CRC-32, which has a 32 bit or 4 byte output. The shortest commonly used hashes are 128 bits or 16 bytes. A 128 bit value has almost 8e28 times as many potential values as a 32 bit value.
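The size difference is easy to check, since Python integers have arbitrary precision:

```python
crc32_outputs = 2 ** 32     # possible values of a 32 bit checksum
hash128_outputs = 2 ** 128  # possible values of a 128 bit hash

ratio = hash128_outputs // crc32_outputs
print(ratio == 2 ** 96)  # True
print(f"{ratio:.2e}")    # 7.92e+28
```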

Checksums are therefore far more prone to collisions than hashes. It is perfectly possible to find two pieces of data with the same CRC-32, but incredibly difficult to find two pieces of data with the same hash.

Due to the avalanche effect, even a single bit changing in the input should result in a completely different hash. This is different to most checksums, where changes to the input result in predictable changes to the checksum.

As such, hashes tend to be more resistant to certain attacks.
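To illustrate how predictable a checksum is, the sketch below applies the same edit to two unrelated messages of the same length and shows that the CRC-32 changes by exactly the same amount both times. The messages and the helper `xor_bytes` are our own examples.

```python
import zlib


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings together."""
    return bytes(x ^ y for x, y in zip(a, b))


original = b"I really like bananas."
tampered = b"I really HATE bananas."
edit = xor_bytes(original, tampered)  # the change, expressed as an XOR mask
crc_change = zlib.crc32(original) ^ zlib.crc32(tampered)

# Apply the same mask to a completely different message of the same length.
unrelated = b"Completely other text."
assert len(unrelated) == len(original)
unrelated_tampered = xor_bytes(unrelated, edit)
other_change = zlib.crc32(unrelated) ^ zlib.crc32(unrelated_tampered)

print(f"{crc_change:08X}")
print(crc_change == other_change)  # True: the CRC change depends only on the edit
```

An attacker can therefore work out how an edit will affect the CRC without knowing the rest of the data. A cryptographic hash is designed to offer no such shortcut.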

File and Data Identification

A cryptographic hash of a file or data can be used to uniquely identify it. This is often used with software or firmware update files to ensure that the file is as expected. They are also used in computer forensics to ensure that data has not changed.
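A minimal sketch of identifying a file by its SHA-256 hash with Python's `hashlib`. The function name `sha256_of_file`, the chunk size and the throwaway demo file are all assumptions for the example.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Demo with a throwaway file standing in for a downloaded firmware image.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"example firmware contents")
    demo_path = tmp.name

published = hashlib.sha256(b"example firmware contents").hexdigest()
matches = sha256_of_file(demo_path) == published
os.remove(demo_path)
print("file matches published hash:", matches)
```

In practice the published value would come from a source the attacker cannot change, such as the manufacturer's website.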

Digital Signatures

To ensure that data is authentic, we make use of digital signatures. The identity of websites you visit using HTTPS is checked because it is digitally signed by a trusted certificate authority. The software installed on your PC can be signed to ensure it is genuine.

A technical limitation of digital signatures is that they can only operate on short blocks of data. It’s not possible to directly sign an entire software installer.

To sign large amounts of data, the data is first hashed. The hash is then signed instead of the data itself.

If there is a weakness in the underlying hash function, the signature cannot be trusted.
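The hash-then-sign idea can be sketched with textbook RSA. This is a toy under loud assumptions: the key is built from two small, publicly known Mersenne primes, there is no padding, and the hash is reduced to fit the tiny modulus. It is nowhere near secure, and real systems should use a vetted cryptographic library. All names here are hypothetical.

```python
import hashlib

# Toy key: two known Mersenne primes. Illustration only, never for real use.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q
E = 65537                          # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))  # private exponent (needs Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """Hash data of any length, then reduce it to fit the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Sign the hash of the message, not the message itself."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Recover the signed hash with the public key and compare."""
    return pow(signature, E, N) == digest_as_int(message)


firmware = b"firmware v1.0" * 1000  # far longer than the signature itself
signature = sign(firmware)

print(verify(firmware, signature))                # True
print(verify(firmware + b"backdoor", signature))  # False
```

The signature only ever covers the hash, which is why a collision in the hash function translates directly into a forged signature.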

Password hashing

When you log in to most systems, you send the server your password. The server then checks that your password matches the one you have previously provided.

That sounds simple. But user databases are frequently leaked or breached. If the passwords are in the database, they can be read and then used. Because of this, passwords are not stored in their raw form. We hash the passwords instead.

Because it is difficult to determine the password that results in a given hash, or find another password that results in the same hash, the attacker cannot trivially work out what the password is.

There is still a weakness here – if you have the hash, you are free to guess the password. You can guess as many passwords as you like. This is called a brute-force attack.

An ideal cryptographic hash function is fast. This means that calculating the hash for a password would be fast. This allows many guesses to be attempted in a short period of time.

An ideal cryptographic hash function is also deterministic. This means that the same password will result in the same hash. It would be possible to precalculate the hashes for many passwords, and then simply lookup the password that corresponds to a hash.
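A precalculated lookup takes only a few lines. This sketch uses a tiny, made-up candidate list; real attacks use lists with many millions of entries.

```python
import hashlib

# Precalculate the hash of every candidate password once.
candidates = ["123456", "password", "qwerty", "letmein"]
lookup = {hashlib.md5(p.encode()).hexdigest(): p for p in candidates}

# Later, any leaked unsalted MD5 hash is a single dictionary lookup away.
leaked_hash = "5f4dcc3b5aa765d61d8327deb882cf99"
print(lookup.get(leaked_hash))  # password
```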

Because of these issues, specific password hash functions exist. Common ones are bcrypt and PBKDF2.

Firstly, they increase the time taken to perform a hash. They do this by using a normal hash function many times. This makes brute-force attacks slower. The number of times this is done is configurable, allowing a balance between the time taken and resistance to brute-force.

Secondly, a random value called a salt is also input into the hash function alongside the password. This is stored alongside the hash. When the password needs to be checked, the salt is read and used with the password to calculate the hash. This means that the same password will not result in the same hash, making the precalculation of password hashes useless.
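Both ideas, a configurable work factor and a per-password salt, can be sketched with PBKDF2 from Python's standard library. The function names, the 16 byte salt and the default iteration count are assumptions for the example; pick the iteration count based on current guidance and your own hardware.

```python
import hashlib
import hmac
import os


def hash_password(password: str, iterations: int = 200_000):
    """Return (salt, derived_key); both are stored alongside the user."""
    salt = os.urandom(16)  # fresh random salt for every password
    derived = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return salt, derived


def check_password(password: str, salt: bytes, expected: bytes,
                   iterations: int = 200_000) -> bool:
    """Recompute the hash with the stored salt and compare in constant time."""
    derived = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return hmac.compare_digest(derived, expected)


salt_a, hash_a = hash_password("password")
salt_b, hash_b = hash_password("password")

print(hash_a == hash_b)                            # False: different salts
print(check_password("password", salt_a, hash_a))  # True
print(check_password("Password", salt_a, hash_a))  # False
```

Because the two stored hashes differ even though the passwords are identical, a precalculated table of hashes is of no use to the attacker.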

Despite these dedicated password hash functions existing, it is still common to find conventional hash functions used. It is around 2 million times faster to calculate an MD5 hash compared to bcrypt. This can be the difference between an attacker working out the password or not.

Password hashing cannot be used when the password needs to be sent to another system. For example, if an IoT lightbulb connects to your WiFi, the WiFi password cannot be stored as a hash inside the device. It must be in a recoverable form to be used to connect.

Common Mistakes

We see a lot of common mistakes made with checksums and hashes. Here are some of them.

Hashes used for Authenticity

As mentioned several times above, if an attacker can modify both the data and hash, it is not possible to detect if the data has been modified. They are not useful for providing data authenticity. This is what digital signatures are for.

It is incredibly common for a hash to be prepended to a firmware file. This does not prevent someone modifying the firmware maliciously.

We’ve even seen a product call an MD5 hash a signature.

Encrypted Checksums used for Authenticity

Data is encrypted to provide confidentiality, not authenticity. It stops someone determining the content of the message. It does not stop them modifying the message.

With most cryptographic algorithms, if you change the encrypted data, it results in random decrypted data.

If we encrypt a simple phrase:

I like cats

d621c9a199d16a93cb5d1586ccdeac29

We can then decrypt it:

d621c9a199d16a93cb5d1586ccdeac29

I like cats

But change just one bit in the encrypted data, and we get a totally unrelated output.

d621c9a199d16a93cb5d1586ccdeac28

ó^e.|.."PÃKV.6ÖÌ

This doesn’t seem useful to an attacker. But if a CRC-32 is contained inside that encrypted data, you can just provide random encrypted data until you randomly get the right CRC value in the decrypted data. Each attempt has a 1 in 2^32 chance of success, so this would take around 4.3 billion attempts on average – well within the realms of possibility for an automated attack.
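The guessing attack can be simulated without any real cipher. This sketch rests on two stated assumptions: decrypting a random ciphertext is modelled as producing uniformly random bytes (via `os.urandom`), and the check is shrunk to a made-up 16 bit truncated CRC so that the loop finishes quickly. A full 32 bit CRC would need 65,536 times as many attempts on average.

```python
import os
import zlib


def looks_valid(block: bytes) -> bool:
    """Toy integrity check: the last 2 bytes must match a truncated CRC-32."""
    body, check = block[:-2], block[-2:]
    return (zlib.crc32(body) & 0xFFFF).to_bytes(2, "big") == check


def attempts_until_accepted(limit: int = 5_000_000) -> int:
    """Feed in random 'decrypted' blocks until one passes the check."""
    for attempt in range(1, limit + 1):
        garbage = os.urandom(16)  # stands in for decrypting random ciphertext
        if looks_valid(garbage):
            return attempt
    raise RuntimeError("no block accepted within the limit")


attempts = attempts_until_accepted()
print("accepted after", attempts, "attempts (expected around 65,536)")
```

The attacker never learns the key or the plaintext. They only need the receiver to accept one of the guesses.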

The root cause of this is assuming that encryption proves that data is authentic.

Weak Password Hash Functions

Despite the existence of dedicated password hash functions, it is still common to find conventional or weak hash functions used.

MD5 is millions of times faster to calculate than bcrypt. For every single password we can guess with bcrypt, we can try millions with MD5. This is a huge difference.

One of the password hash functions that can be used by Linux systems is a DES-based one, commonly known as descrypt. Whilst this was suitably slow to calculate when it was designed forty years ago, increases in processing power mean that it is no longer considered secure.

Worse still, descrypt only considers the first 8 characters of the password entered. “password” and “password&767%^%46” are handled exactly the same.

The combination of it being fast to brute-force and only supporting 8 character passwords means that it is viable to exhaustively search all passwords in a reasonable time frame.

Only dedicated and modern password hash functions should be used.

Use of Broken Hash Functions

Due to their heavy use in digital signatures, significant effort is spent analysing the security of hash functions. Weaknesses are found in them, and then they are considered “broken”.

MD5 is very commonly used, but it is possible to find a collision in a few seconds on a laptop. A simple collision like this – two arbitrary inputs that produce the same hash – is not of much use to an attacker. But with some more effort, it is possible to find two related inputs with the same MD5 hash. This has been used in attacks in the past – a certificate authority believed they were signing a conventional website certificate, but in reality, they were signing another certificate authority certificate that had the same hash value. More can be read about this here, but it is not particularly easy to follow.

A viable pre-image attack has not been found for MD5. This would allow an attacker to simply generate another message given the value of a hash. This is a much more serious attack.

Regardless, MD5 should be considered broken and no longer used. More to the point, there are more secure alternatives that can be used. No new systems should use insecure hash functions.

This site has a useful table showing how various hash functions have been broken over time. Once a collision is found, it should be considered game over. Cryptographic attacks can only improve over time, not get worse.

Conclusion

Hopefully you’ve learned about some of the uses of checksums and hashes, and the pitfalls surrounding them.